Sparse Virtual Textures

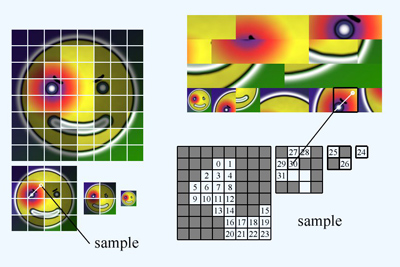

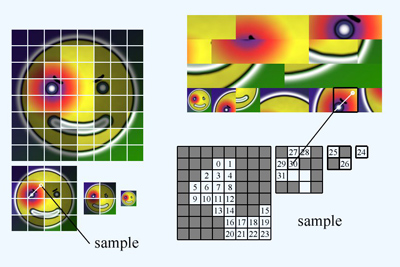

Sparse Virtual Texturing is an approach to simulating very large textures using much less texture memory than they would require in full: only the data that is actually needed is downloaded, and a pixel shader maps from the large virtual texture to the actual physical texture.
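The mapping the pixel shader performs can be sketched on the CPU. This Python is a minimal sketch under stated assumptions, not the demo's shader: the page size, the virtual and physical dimensions, and the dictionary standing in for the page-table texture are all hypothetical. When the requested page isn't resident, the sketch falls back to coarser mip levels, assuming the coarsest level (one page covering the whole texture) is always resident.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Hypothetical parameters, for illustration only.
PAGE_SIZE = 128        # texels per page side
VIRTUAL_PAGES = 256    # virtual texture is 256 x 256 pages at mip 0
PHYSICAL_SLOTS = 32    # physical texture holds 32 x 32 pages

@dataclass
class PageTable:
    # (mip, page_x, page_y) -> (slot_x, slot_y), present only for resident pages
    entries: Dict[Tuple[int, int, int], Tuple[int, int]]

    def translate(self, u: float, v: float, mip: int) -> Tuple[float, float, int]:
        """Map virtual (u, v) in [0, 1) to physical (s, t); also return the mip actually used."""
        max_mip = VIRTUAL_PAGES.bit_length() - 1  # mip at which one page covers everything
        for level in range(mip, max_mip + 1):
            pages = VIRTUAL_PAGES >> level        # pages per side at this mip
            px, py = int(u * pages), int(v * pages)
            slot = self.entries.get((level, px, py))
            if slot is not None:
                # Position inside the page, in [0, 1), carried over to the physical slot.
                fx, fy = u * pages - px, v * pages - py
                return (slot[0] + fx) / PHYSICAL_SLOTS, (slot[1] + fy) / PHYSICAL_SLOTS, level
        raise LookupError("the coarsest mip page must always be resident")
```

A real implementation does this per pixel with a page-table texture and also has to handle filtering across page borders, which this sketch ignores.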

The technique could be used for very large textures, or simply for large quantities of smaller textures (by packing them into the large texture, or by using multiple page tables).
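Packing many smaller textures into the large one only requires remembering where each one lives and remapping its UVs. The sketch below is a hypothetical illustration, not the demo's code: `Placement`, `pack_rows`, and `to_virtual_uv` are invented names, and the naive shelf packer rounds every texture up to whole pages so that no two textures share a page.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class Placement:
    """Where a small texture sits inside the virtual texture, in texels (hypothetical)."""
    x: int
    y: int
    width: int
    height: int

def pack_rows(sizes: List[Tuple[int, int]], virtual_size: int, page_size: int) -> List[Placement]:
    """Naive shelf packer: left to right in rows, each texture rounded up to whole pages."""
    placements = []
    x = y = row_h = 0
    for w, h in sizes:
        w_al = -(-w // page_size) * page_size   # ceiling to a page multiple
        h_al = -(-h // page_size) * page_size
        if x + w_al > virtual_size:             # no room left in this row: start a new one
            x, y, row_h = 0, y + row_h, 0
        if y + h_al > virtual_size or w_al > virtual_size:
            raise ValueError("virtual texture is full")
        placements.append(Placement(x, y, w, h))
        x += w_al
        row_h = max(row_h, h_al)
    return placements

def to_virtual_uv(p: Placement, u: float, v: float, virtual_size: int) -> Tuple[float, float]:
    """Local (u, v) in [0, 1) inside the small texture -> (u, v) in the virtual texture."""
    return (p.x + u * p.width) / virtual_size, (p.y + v * p.height) / virtual_size
```

Page alignment wastes some virtual space, but virtual space is cheap; only resident pages cost physical memory.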

It was mostly inspired by John Carmack's descriptions of MegaTexturing in several public and private forums and emails. It may not be exactly the same as MegaTexture, but it's probably close.

The slides are also redundant with the software below, which incorporates them directly into the presentation, if you can get the software running.

Release 2: fixed some accidental dependencies on Nvidia GLSL features.

Look at readme.txt first.

All the source code is in the public domain. This is a research codebase, so I doubt you'll want to use the source code directly rather than just read it, but it's public domain just in case I'm wrong. The source code uses OpenGL, but requires Windows.

The download also includes the executable, but it probably won't work. Different shader compilers produce different warnings and errors, and I didn't work on eliminating them across all platforms, nor on handling them gracefully. Likewise, even if it runs, it may run poorly on some hardware, because I'm doing dumb, naive things; my goal wasn't maximum performance. I never even ran it outside the IDE (not even when I gave the GDC talk), so errors may not be reported properly outside it.

This file includes two versions of the talk written up as text: one substantially the same as the talk as I gave it, and an earlier draft with a lot more text and a bunch of things I decided not to talk about. (The earlier draft also has a huge excursion into virtual memory; I originally tried to explain SVT by analogy to virtual memory, but realized it would be clearer to start right in with SVT, as long as I had several million pictures to show what was going on.)

I don't have a good answer to the question of how to render directly into the SVT (naively it requires redirecting where each write lands, which APIs haven't generally supported, although maybe that got added recently and I missed it). However, there may be good answers to a different question, namely whatever it is that prompts you to want to do this.

For example, as I said in the talk, if you want to build your procedural texture pages by rendering into a texture, that's just fine: build them a block at a time, and no redirection is needed. I do it in software, but hardware is certainly feasible (and possibly more efficient). You might run into texture memory issues if you do it by compositing bitmaps, though, which I think you want to do, since you want to rely on your artists rather than on some crazy programmatic procedural shading.
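Building a page a block at a time by compositing bitmaps can be sketched in software like this. It is a minimal sketch, not the demo's builder: the `Layer` format (grayscale value plus alpha per texel), `build_page`, and plain "over" alpha blending are assumptions chosen to keep the example short. The key point is that each layer is clipped to the one page being built, so only layers that overlap the page do any work.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Layer:
    """An artist-authored bitmap stamped into the virtual texture (hypothetical format).

    pixels[y][x] is (value, alpha), both in [0, 1]; grayscale keeps the sketch short.
    """
    x: int  # placement in virtual texels
    y: int
    pixels: List[List[Tuple[float, float]]]

def build_page(page_x: int, page_y: int, page_size: int,
               layers: List[Layer], background: float = 0.0) -> List[List[float]]:
    """Composite every layer overlapping this page, in order, clipped to the page."""
    x0, y0 = page_x * page_size, page_y * page_size
    page = [[background] * page_size for _ in range(page_size)]
    for layer in layers:
        h, w = len(layer.pixels), len(layer.pixels[0])
        # Intersect the layer's rectangle with the page's rectangle (virtual texel space);
        # an empty intersection simply produces empty ranges below.
        left, right = max(x0, layer.x), min(x0 + page_size, layer.x + w)
        top, bottom = max(y0, layer.y), min(y0 + page_size, layer.y + h)
        for vy in range(top, bottom):
            for vx in range(left, right):
                value, alpha = layer.pixels[vy - layer.y][vx - layer.x]
                old = page[vy - y0][vx - x0]
                page[vy - y0][vx - x0] = value * alpha + old * (1.0 - alpha)  # "over" blend
    return page
```

A hardware version would do the same thing with the page as the render target and each layer drawn as a quad.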

If you want to do it to add decals on the fly, it's very simple. At some point you'll flush a given page out of the cache and later need to download it again, and it will still need to have the decal. So make adding decals a procedural-texturing sort of process, even if the basic data is a disk-streamed bitmap: every time you build or stream a page, overlay any decals it needs, on a page-by-page basis. Now, when you want to add a decal dynamically, you can just invalidate the cache and, hey, it just works (but less efficiently, since the pages get rebuilt). Or, if you care about that efficiency, you can go through all the downloaded pages and, for each one the decal intersects, render the decal onto that page. You will need to clip the decal to each target page, and I don't know how much that state change (viewport/scissor) costs; I assume not much. This is exactly how it would already work if you were doing the procedural build in hardware anyway, but it's all extra code if you do the procedural build in software.
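Both decal strategies can be sketched around a small page cache. This is a hypothetical sketch, not the demo's code: `PageCache`, the LRU eviction policy, the `build_fn` callback, and the opaque rectangular decal format are all assumptions. Decals are re-applied every time a page is built, so they survive eviction; `add_decal` either invalidates everything or patches only the resident pages, clipping the decal to each page (the software analogue of a scissor rectangle).

```python
from collections import OrderedDict
from typing import Callable, List, Tuple

Page = List[List[int]]
Decal = Tuple[int, int, int, int, int]  # (x, y, width, height, value) in virtual texels

class PageCache:
    """LRU cache of built pages; every freshly built page gets its decals re-applied."""

    def __init__(self, capacity: int, page_size: int, build_fn: Callable[[int, int], Page]):
        self.capacity = capacity
        self.page_size = page_size
        self.build_fn = build_fn        # hypothetical: streams or procedurally builds a page
        self.decals: List[Decal] = []
        self.pages: "OrderedDict[Tuple[int, int], Page]" = OrderedDict()  # oldest first

    def _overlay(self, key: Tuple[int, int], page: Page, decal: Decal) -> None:
        x0, y0 = key[0] * self.page_size, key[1] * self.page_size
        dx, dy, dw, dh, value = decal
        # Clip the decal to this page; pages it doesn't touch get empty ranges.
        for vy in range(max(y0, dy), min(y0 + self.page_size, dy + dh)):
            for vx in range(max(x0, dx), min(x0 + self.page_size, dx + dw)):
                page[vy - y0][vx - x0] = value

    def get(self, key: Tuple[int, int]) -> Page:
        if key in self.pages:
            self.pages.move_to_end(key)          # mark as most recently used
            return self.pages[key]
        page = self.build_fn(*key)
        for decal in self.decals:                # decals are part of building a page
            self._overlay(key, page, decal)
        if len(self.pages) >= self.capacity:
            self.pages.popitem(last=False)       # evict the least recently used page
        self.pages[key] = page
        return page

    def add_decal(self, decal: Decal, invalidate: bool = False) -> None:
        self.decals.append(decal)
        if invalidate:
            self.pages.clear()                   # simple: pages rebuild on next use
            return
        for key, page in self.pages.items():     # efficient: patch resident pages in place
            self._overlay(key, page, decal)
```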

If you have some OTHER reason to render into the SVT, I have no idea. Working a block at a time seems like the obvious solution, although in other cases there might be too much geometry for that to be acceptable.

As I mentioned (I think), this is a tech demo. I'm not trying to ship a game with it, so the obvious caveats apply about overgeneralizing from what I've succeeded at to what is possible. (Hopefully id's tech5 is reasonable proof of what is possible.) I decided not to mention this at the time, because I didn't want to scare anyone off, but this is actually the first pixel shader I've ever written. I'm not really a rendering guy these days; I wrote the original SVT tech demo last year to keep my hand vaguely in that game. This means a few things. For one, I'm not really sure where the biggest performance issues are these days; I'm assuming you folks can take the idea and run with it to get the highest performance. For example, I totally forgot that the reason everyone loves DXT compression isn't the texture memory savings, but texture sampling performance (due to bandwidth and caching). So the page table textures can be big bloated floats just fine, since each page-table texel is sampled repeatedly by many nearby pixels, but packing the physical textures into a compressed format is crucial for performance. So mostly I'm just saying: if you felt there were issues like this in the talk, sorry about that!
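A little arithmetic shows why the page table can afford fat texels while the physical texture can't. The sizes below are hypothetical, chosen only to make the numbers concrete: a 4096 x 4096 physical texture (32 x 32 pages of 128 x 128 texels) and an RGBA32F page table for a 256 x 256-page virtual texture. DXT1/BC1 is 8 bytes per 4 x 4 block (4 bits per texel) and DXT5/BC3 is 16 bytes per block (8 bits per texel).

```python
MiB = 1024 * 1024

def texture_bytes(width: int, height: int, bits_per_texel: int) -> int:
    """Size of one mip level, ignoring padding and the rest of the mip chain."""
    return width * height * bits_per_texel // 8

# Hypothetical sizes, for illustration only.
PHYSICAL = 4096      # physical texture side in texels
PAGE_TABLE = 256     # page-table side: one texel per virtual page

rgba8 = texture_bytes(PHYSICAL, PHYSICAL, 32)           # uncompressed physical texture
dxt1 = texture_bytes(PHYSICAL, PHYSICAL, 4)             # DXT1/BC1
dxt5 = texture_bytes(PHYSICAL, PHYSICAL, 8)             # DXT5/BC3
page_table = texture_bytes(PAGE_TABLE, PAGE_TABLE, 128)  # RGBA32F entries

for name, size in [("physical RGBA8", rgba8), ("physical DXT1", dxt1),
                   ("physical DXT5", dxt5), ("page table RGBA32F", page_table)]:
    print(f"{name}: {size / MiB:g} MiB")
```

Each page-table texel covers a whole 128 x 128 page, so neighboring pixels keep hitting the same few page-table texels and they stay in the texture cache. The physical texture is where the bulk of the texel fetches, and so the bandwidth, goes, and DXT1 cuts the cost of each fetched texel from 4 bytes to half a byte.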

sean@silverspaceship.com